Hallucinations as a new(ish) threat model for academics

arXiv clamps down

As academics, our central remit in writing papers has always been to cite the work that came before ours, to give context to our findings, to acknowledge prior art, and to engage deeply with the knowledge corpus. Omission of relevant citations has many origins, from the benign, to the lazy, to the pernicious. Most academics know of people in their field who systematically under-cite, and for those people, it’s not a good look. The norms of academia (feedback on public preprints, peer review, accountability during promotion, etc.) are supposed to correct for the under-citation problem. But it’s always been an imperfect system, and we’ve gotten used to it.

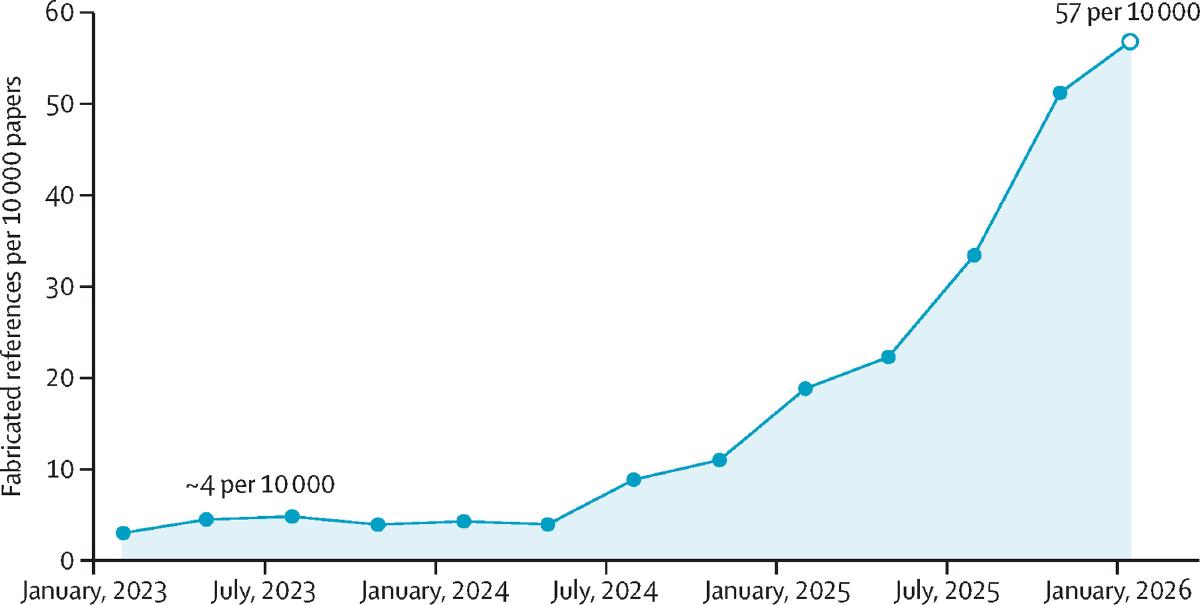

Now, as academics start using LLMs not only to accelerate the ideation phases of research but also for the paper-writing process itself, a new avenue of trouble is arising at scale: the commission of fabricated citations. When a person fabricates a citation, for example to support a claim in their work, it’s considered fraud. When an LLM does it, we call it a hallucination. The work of Topaz et al., published last week (Fig. 1), makes clear that fabricated citations have been steadily increasing since frontier models started gaining wider adoption. In March, Adam Ruben explored some of the reasons LLMs hallucinate and why it’s challenging for us humans to catch all such errors.

LLM-hallucinated citations have concerned those paying attention for years. And like citation omission, the commission of LLM-hallucinated citations was likely viewed as just a new occupational hazard, yet another part of the imperfect system.

Then, yesterday, Thomas Dietterich, a moderator and principal at the premier preprint server arXiv, made a startling announcement: hallucinated citations will not be tolerated, and an author caught submitting a paper with a hallucinated citation will be banned from submitting to arXiv for one year. The existence of hallucinations, reckons arXiv, is a red-flag indicator that a human author did not actually write, read, and/or verify the content they signed their name to. As arXiv is a critical venue for academics to present their work, such a banishment would severely hamper an academic’s ability to perform a basic facet of the job. Letting an LLM hallucinate on your behalf can now lead to a serious and consequential blow to your reputation and, by extension, your livelihood. Hallucinations aren’t just an academic annoyance; they are a new threat vector.

Hallucinations are one of the problems in the AI/science space that I’ve been sitting with, and concerned about, for the past year. So I wanted to build something to help. Last week my company announced Valency Bond, in limited preview, to give LLMs access to a real corpus of 50 million+ academic papers and preprints. While the use cases for Valency Bond are wide, we’ve found that frontier LLMs connected to Valency Bond become more tethered to the relevant work, and those works are surfaced as real citations to the researcher interacting with the LLM. We still need to sign off on the work we submit, but my hope is that products like Valency Bond will help tamp down this new threat vector and unlock our ability as academics to accelerate our research with AI.

References

Adam Ruben, “Cite unseen: when AI hallucinates scientific articles” (Science, 10.1126/science.zfae4z1; 30 Mar 2026)

Maxim Topaz, Nir Roguin, Pallavi Gupta, Zhihong Zhang, Laura-Maria Peltonen, “Fabricated citations: an audit across 2.5 million biomedical papers” (Lancet, Volume 407, Issue 10541, p1779-1781; 9 May 2026)